Fighting online trolls with bots

Reposted from The Conversation. https://theconversation.com/fighting-online-trolls-with-bots-70941

The wonder of internet connectivity can turn into a horror show if the people who use online platforms decide that instead of connecting and communicating, they want to mock, insult, abuse, harass and even threaten each other. In online communities since at least the early 1990s, this has been called “trolling.” More recently it has been called cyberbullying.

It happens on many different websites and social media systems. Users have been fighting back for a while, and now the owners and managers of those online services are joining in.

The most recent addition to this effort comes from Twitch, one of a few increasingly popular platforms that allow gamers to play video games, stream their gameplay live online and type back and forth with people who want to watch them play.

Players do this to show off their prowess (and in some cases make money). Game fans do this for entertainment or to learn new tips and tricks that can improve their own play.

Large, diverse groups of people engaging with each other online can yield interesting cooperation. For example, in one video game I helped build, people watching a stream could make comments that would actually give the player help, like slowing down or attacking enemies. But of the thousands of people tuning in daily to watch gamer Sebastian “Forsen” Fors play, for instance, at least some try to overwhelm or hijack the chat away from the subject of the game itself. This can be a mere nuisance, but can also become a serious problem, with racism, sexism and other prejudices coming to the fore in toxic and abusive comment threads.

In an effort to help its users fight trolling, Twitch has developed bots – software programs that can run automatically on its platform – to monitor discussions in its chats. At present, Twitch’s bots alert the game’s host, called the streamer, that someone has posted an offensive word. The streamer can then decide what action to take, such as blocking the user from the channel.

Trolls can share pornographic images in a chat channel, instead of having conversations about the game. Chelly Con Carne/YouTube, CC BY-ND

Beyond just helping individual streamers manage their audiences’ behavior, this approach may be able to capitalize on the fact that online bots can help change people’s behavior, as my own research has documented. For instance, a bot could approach people using racist language, question them about being racist and suggest other forms of interaction to change how people interact with others.

Using bots to affect humans

In 2015 I was part of a team that created a system that uses Twitter bots to do the activist work of recruiting humans to do social good for their community. We called it Botivist.

We used Botivist in an experiment to find out whether bots could recruit people and get them to contribute ideas about tackling corruption instead of just complaining about it. We set up the system to watch Twitter for people complaining about corruption in Latin America, identifying the keywords “corrupcion” and “impunidad,” the Spanish words for “corruption” and “impunity.”

When it noticed relevant tweets, Botivist would tweet in reply, asking questions like “How do we fight corruption in our cities?” and “What should we change personally to fight corruption?” Then it waited to see whether people replied, and what they said. Botivist asked those who engaged follow-up questions and invited them to volunteer to help fight the problem they were complaining about.
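As a rough illustration of the watch-and-reply loop described above, the following Python sketch checks a tweet for the two keywords and picks one of the quoted questions. It is only a sketch under stated assumptions: the accent-stripping, the punctuation handling and the helper names (`normalize`, `is_relevant`, `pick_question`) are hypothetical and are not taken from Botivist itself, and no real Twitter API calls are shown.

```python
import unicodedata

# Keywords named in the article; everything else here is an illustrative assumption.
KEYWORDS = {"corrupcion", "impunidad"}

# The two example questions quoted in the article.
QUESTIONS = [
    "How do we fight corruption in our cities?",
    "What should we change personally to fight corruption?",
]


def normalize(text: str) -> str:
    """Lowercase and strip accents so that 'Corrupción' matches 'corrupcion'."""
    decomposed = unicodedata.normalize("NFD", text.lower())
    return "".join(ch for ch in decomposed if unicodedata.category(ch) != "Mn")


def is_relevant(tweet: str) -> bool:
    """Return True if any word in the tweet is one of the watched keywords."""
    words = normalize(tweet).replace("#", " ").split()
    return any(word.strip(".,;:!?¡¿\"'") in KEYWORDS for word in words)


def pick_question(tweet_id: int) -> str:
    """Rotate through the questions (a hypothetical selection rule)."""
    return QUESTIONS[tweet_id % len(QUESTIONS)]


if __name__ == "__main__":
    sample = "Basta de corrupción!"
    if is_relevant(sample):
        print(pick_question(0))
```

A real deployment would also need rate limiting and a way to track which users have already been contacted, both of which are omitted here.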

We found that Botivist was able to encourage people to go beyond simply complaining about corruption, pushing them to offer ideas and engage with others sharing their concerns. Bots could change people’s behavior! However, we also found that some individuals began debating whether – and how – bots should be involved in activism. Even so, the experiment suggests that people who are comfortable engaging with bots online could be mobilized to work toward a solution, rather than just complaining about the problem.

Humans’ reactions to bots’ interventions matter, and inform how we design bots and what we tell them to do. In research at New York University in 2016, doctoral student Kevin Munger used Twitter bots to engage with people expressing racist views online. Calling out Twitter users for racist behavior ended up reducing those users’ racist communications over time – if the bot doing the chastising appeared to be a white man with a large number of followers, two factors that conferred social status and power. If the bot had relatively few followers or was a black man, its interventions were not measurably successful.

Raising additional questions

Bots’ ability to affect how people act toward each other online brings up important issues our society needs to address. A key question is: What types of behaviors should bots encourage or discourage?

It’s relatively benign for bots to notify humans about specifically hateful or dangerous words – and let the humans decide what to do about it. Twitch lets streamers decide for themselves whether they want to use the bots, as well as what (if anything) to do if the bot alerts them to a problem. Users’ reasons for not using the bots include both technological factors and concerns about comments. In conversations I have seen among Twitch streamers, some have described disabling the bots because they interfere with browser add-ons the streamers already use to manage their audience chat space. Other streamers have disabled the bots because they feel bots hinder audience participation.

But it could be alarming if we ask bots to influence people’s free expression of genuine feelings or thoughts. Should bots monitor language use on all online platforms? What should these “bot police” look out for? How should the bots – which is to say, how should the people who design the bots – handle those Twitch streamers who appear to enjoy engaging with trolls?

One Twitch streamer posted a positive view of trolls on Reddit:

“…lmfao! Trolls make it interesting […] I sometimes troll back if I’m in a really good mood […] I get similar comments all of the time…sometimes I laugh hysterically and lose focus because I’m tickled…”

Other streamers even enjoy sharing their witty replies to trolls:

“…My favorite was someone telling me in Rocket League ‘I hope every one of your followers unfollows you after that match.’ My response was ‘My mom would never do that!’ Lol…”

What about streamers who actually want to make racist or sexist comments to their audiences? What if their audiences respond positively to those remarks? Should a bot monitor a player’s behavior on his own channel against standards set by someone else, such as the platform’s administrators? And what language should the bots watch for – racism, perhaps, but what about ideas that are merely unpopular, rather than socially damaging?

At present, we don’t have ways of thinking about, talking about or deciding on these balancing acts of freedom of expression and association online. In the offline world, people are free to say racist things to willing audiences, but suffer social consequences if they do so around people who object. As bots become more able to participate in, and exert influence on, our human interactions, we’ll need to decide who sets the standards and how, as well as who enforces them, in online communities.

Savvy social media strategies boost anti-establishment political wins

Reposted from The Conversation. https://theconversation.com/savvy-social-media-strategies-boost-anti-establishment-political-wins-98670

By Saiph Savage, Claudia Flores-Saviaga

Disclosure statement

The authors do not work for, consult, own shares in or receive funding from any company or organisation that would benefit from this article, and have disclosed no relevant affiliations beyond their academic appointment.

Mexico’s anti-establishment presidential candidate, Andrés Manuel López Obrador, faced opposition from the mainstream media. And he spent 13 percent less on advertising than his opponents. Yet the man commonly known by his initials as “AMLO” went on to win the Mexican presidency in a landslide with over 53 percent of the vote in a four-way race in July.

That remarkable victory was at least partly due to the social media strategies of the political activists who backed him. Similar strategies appeared in the 2016 U.S. presidential election and the 2017 French presidential race.

Our lab has been analyzing these social media activities to understand how they’ve worked to threaten – and topple – establishment candidates. By analyzing more than 6 million posts from Reddit, Facebook and Twitter, we identified three main online strategies: using activist slang, attempting to “go viral” and providing historical context.

Redditors' responses to information strategies

In our study of activity in a key Trump-supporting subreddit, citizens tended to engage most with posts that explained the political ecosystem to them, commenting more on them and giving more upvotes of support.

Some of these strategies might simply be online adaptations of long-standing strategies used in traditional offline campaigning. But others seem to be new ways of connecting and driving people to the polls. Our lab was interested in understanding the dynamics behind these online activists in greater detail, especially as some had crossed over from being merely supporters – even anonymous ones – not formally affiliated with campaigns, to being officially incorporated in campaign teams.

Integrating activist slang

Some political activists pointedly used slang in their online conversations, creating a dynamic that elevated their candidate as an opponent of the status quo. Trump backers, for instance, called themselves “Deplorables,” supporting “the God Emperor” Trump against “Killary” Clinton.

AMLO backers called themselves “AMLOVERS” or “Chairos,” and had nicknames for his opponents, such as calling the other presidential candidate, Ricardo Anaya, “Ricky Riquin Canayin” – Spanish for “The Despicable Richy Rich.”

Efforts to ‘go viral’

Some political activists worked hard to identify the material that was most likely to attract wide attention online and get media coverage. Trump backers, for instance, organized on the Discord chat service and Reddit forums to see which variations of edited images of Hillary Clinton were most likely to get shared and go viral. They became so good at getting attention for their posts that Reddit actually changed its algorithm to stop Trump backers from filling up the site’s front page with pro-Trump propaganda.

Similarly, AMLO backers were able to keep pro-AMLO hashtags trending on Twitter, such as #AMLOmania, in which people across Mexico made promises of what they would do for the country if AMLO won. The vows ranged from free beer and food in restaurants to free legal advice.

For instance, an artist promised to paint an entire rural school in Veracruz, Mexico, if AMLO won. A law firm promised to waive its fees for 100 divorces and alimony lawsuits if AMLO won. The goal of citizen activists was to motivate others to support AMLO, while doing positive things for their country.

The historian-style activists

Some anti-establishment activists were able to recruit more supporters by providing detailed explanations of the political system as they saw it. Trump backers, for instance, created electronic manuals advising supporters how to explain their viewpoint to opponents to get them to switch sides. They compiled the top WikiLeaks revelations about Hillary Clinton, assembled explanations of what they meant and asked people to share them.

Pro-AMLO activists did even more, creating a manual that explained Mexico’s current economics and how the proposals of their candidate would, in their view, transform and improve Mexico’s economy.

Our analysis identified that one of the most effective strategies was taking time to explain the sociopolitical context. Citizens responded well to, and engaged with, specific reasoning about why they should back specific candidates.

As the U.S. midterm elections approach, it’s worth paying attention to whether – and in what races – these methods reappear; and even how people might use them to engage in fruitful political activism that brings the changes they want to see. You can read more about our research in our new ICWSM paper.

Activist Bots: Helpful But Missing Human Love?

Political bots are everywhere, swamping online conversations to promote a political candidate, sometimes even engaging in swiftboating. But instead of continuing to build more political bots, what about creating bots for people, e.g., activists? What do bots for social change look like?

It might help to first ask: when might activists need bots?

Activists can suffer extreme dangers, including being murdered. Given that bots can remove responsibility from humans, we could think of designing bots that execute and take responsibility for tasks that are dangerous for human activists to do. At the end of the day, what happens if you kill a bot?

Our interviews with activists have also highlighted that activists have to spend excessive time on recruitment, i.e., trying to convince people to join their cause. While obtaining new members is crucial to the long-term survival of any activist group, activists sometimes spend excessive time trying to convince people who in the end might never participate. Plus, it can be hard for humans to test and rapidly figure out what recruitment campaigns work best: is it better to have a solidarity campaign that reminds individuals of the importance of helping each other? Or is it more effective to just be upfront and directly ask for participation? The automation aspect of bots means that we could use them to probe different recruitment campaigns at scale, without having humans spend too much time on these tedious tasks.

These ideas about how task automation could help activists led us to design Botivist, a system that uses online bots to recruit humans for activism. The bots also allow activists to easily probe different recruitment strategies.

We conducted a study on Botivist to understand the feasibility of using bots to convince people to participate in activism. In particular, we studied whether bots could recruit people and get them to contribute ideas about tackling corruption. We found that over 80 per cent of the calls to action made by Botivist’s automated activists received at least one response. However, we also found that the strategy the bots used mattered. We were surprised to discover that strategies that work well face-to-face were less effective when used by bots. Messages that are effective when delivered by humans sometimes resulted in circular discussions in which people questioned whether bots should be involved in activism. Persuasive strategies generally drew fewer responses from people.
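To make the idea of comparing strategies concrete, here is a minimal Python sketch of how one might compute, for each recruitment strategy, the share of calls to action that received at least one response. The strategy labels (`"direct"`, `"solidarity"`), the input format and the function name `response_rates` are assumptions made for illustration; they are not the data or code from the Botivist study.

```python
from collections import defaultdict


def response_rates(calls):
    """Share of calls to action with at least one reply, per strategy.

    `calls` is an iterable of (strategy_label, reply_count) pairs.
    Both the labels and the input format are hypothetical.
    """
    totals = defaultdict(int)
    answered = defaultdict(int)
    for strategy, replies in calls:
        totals[strategy] += 1
        if replies > 0:
            answered[strategy] += 1
    return {strategy: answered[strategy] / totals[strategy] for strategy in totals}


if __name__ == "__main__":
    # Made-up example data, purely to show the shape of the computation.
    example = [("direct", 2), ("direct", 0), ("solidarity", 1), ("direct", 1)]
    for strategy, rate in response_rates(example).items():
        print(f"{strategy}: {rate:.0%} of calls got a response")
```

A fuller analysis would also look at the content of the replies, since a reply that questions the bot is not the same as a contribution.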

The individuals who decided to collaborate with Botivist were already involved in online activism and marketing. They mentioned hashtags and Twitter accounts related to social causes and marketing analytics. It is likely that people linked Botivist to online marketing schemes. Those who responded to Botivist were therefore the ones who already engage with such marketing agents in their communities, so responding was perhaps more natural for them.

To design bots for activists, it is necessary to first understand the communities in which the bots are being deployed. If we want to design bots that can take on some of the more dangerous activities of human activists, we have to first understand how people react when an automated agent now conducts the task. Will it be as effective as when done by a human? Many activists who endanger their lives making timely reports of terrorists or organized criminals are usually very empathic, caring, and have great solidarity with their public. Will it matter when these tasks are now done by an automated agent who by nature cannot care?

To read more about our system Botivist, check out our CSCW 2016 research paper: Botivist: Calling Volunteers to Action Using Online Bots, with Saiph Savage, Andres Monroy-Hernandez, Tobias Hollerer.

Points/talking bots: “Activist Bots: Helpful But Missing Human Love?” is a contribution to a weeklong workshop at Data & Society that was led by “Provocateur-in-Residence” Sam Woolley and brought together a group of experts to get a better grip on the questions that bots raise. More posts from workshop participants talking bots:

- How to Think About Bots by Samuel Woolley, danah boyd, Meredith Broussard, Madeleine Elish, Lainna Fader, Tim Hwang, Alexis Lloyd, Gilad Lotan, Luis Daniel Palacios, Allison Parrish, Gilad Rosner, Saiph Savage, and Samantha Shorey

- What is it like to be a bot? by Samantha Shorey

- Bots: A definition and some historical threads by Allison Parrish

- Our friends, the bots? by Alexis Lloyd

- On Paying Attention: How to Think about Bots as Social Actors by Madeleine Elish

- What is the Value of a Bot? by danah boyd

- Rise of the Peñabots by Luis Daniel Palacios

- A Brief Survey of Journalistic Twitter Bot Projects by Lainna Fader

‘Making Europe Great Again,’ Trump’s online supporters shift attention to the French election

The online movement that played a key role in getting Donald Trump elected president of the United States has begun to spread its political influence globally, starting with crossing the Atlantic Ocean.

Among several key elections happening in 2017 around Europe, few are as hotly contested as the race to become the next president of France.

Having helped install their man in the White House in D.C., a group of online activists is now trying to get their far-right woman, Marine Le Pen, into the Élysée Palace in Paris.

In 2016, a group of online activists some might call trolls — people who engage online with the specific intent of causing trouble for others — joined forces on internet comment boards like 4chan and Reddit to promote Donald Trump’s candidacy for the White House. These online rebels embraced Trump’s conscious efforts to disrupt mainstream media coverage, normal politics and public discourse. His anti-establishment message resonated with the internet’s underground communities and inspired their members to act.

The effects of their collective work, for the media, the public and indeed the country, are still unfolding. But many of the same individuals who played important roles in the online effort for Trump are turning their attention to politics elsewhere. Their goal, one participant told Buzzfeed, is “to get far right, pro-Russian politicians elected worldwide,” perhaps with a secondary goal of heightening Western conflict with Muslim countries.

Our research has focused on studying political actors and citizen participation on social media. We used our experience to analyze 16 million comments on five separate Reddit boards (called “subreddits”). Our analysis suggests that some of the same people who played significant roles in a key pro-Trump subreddit are sharing their experience with their French counterparts, who support the nationalist anti-immigrant candidate Le Pen.

Finding Trump backers active in European efforts

The so-called “alt-right” movement, an offshoot of conservatism mixing racism, white nationalism and populism, is fed in part by online trolls, who use 4chan message boards and the Discord messaging app to create thousands of memes — images combining photographs and text commentary — related to political causes they want to promote.

As Buzzfeed reported, they test political images on Reddit to see which get the most attention and biggest reactions, before sending them out into the wider world on Facebook, Twitter and other social media platforms. However, it wasn’t clear how much this actually happened.

We set out to quantify exactly what was happening, how often, and how many people were involved. We started with the subreddit “The_Donald,” one of the largest pro-Trump hubs, and analyzed the activity of every Reddit username that had ever commented in that subreddit from its start in 2015 until February 2017. We looked specifically for those same usernames’ appearances in European-related “sister subreddits,” as recognized by “The_Donald” users themselves.

We found that of the more than 380,000 active Reddit users in “The_Donald,” over 3,000 of them had indeed participated in one or more of the “sister subreddits” supporting right-wing candidates in European elections, “Le_Pen,” “The_Europe,” “The_Hofer” and “The_Wilders.” The first two had the most involvement from people also active in “The_Donald.” This is admittedly a small percentage of participants, but it shows that there is overlap, and that the knowledge and techniques used to support Trump are making their way to Europe.
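The overlap described above amounts to a set intersection between commenter usernames. The Python sketch below shows one way such a count could be computed; the function name `overlap_share`, the input shapes and the toy usernames are assumptions for illustration only, not the code used in the study.

```python
def overlap_share(home_users, sister_users_by_sub):
    """Find users of a home subreddit who also appear in any sister subreddit.

    `home_users` is an iterable of usernames from the home subreddit.
    `sister_users_by_sub` maps a subreddit name to an iterable of usernames.
    Returns (shared_usernames, fraction_of_home_users).
    """
    home = set(home_users)
    in_any_sister = set()
    for users in sister_users_by_sub.values():
        in_any_sister.update(users)
    shared = home & in_any_sister
    share = len(shared) / len(home) if home else 0.0
    return shared, share


if __name__ == "__main__":
    # Toy usernames, invented for the example.
    home = ["a", "b", "c", "d"]
    sisters = {"Le_Pen": ["a", "x"], "The_Europe": ["b", "a"]}
    shared, share = overlap_share(home, sisters)
    print(sorted(shared), f"{share:.1%}")
```

Plugging in the article's rounded figures, 3,000 out of 380,000 users works out to roughly 0.8 percent, which matches the description of the overlap as a small percentage of participants.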

What are they up to?

Next we looked at how involved these Trump-supporting users were in the European right-wing discussions, based on how many comments a user made in any of the subreddits. Most users were moderately active, as might be expected of casual users exploring issues of personal interest. But we identified several accounts with behavior that suggested they might be actively organizing ultra-right collective action in the U.S. and Europe.

There were three types of these users: People who were actively involved in European efforts, bots making automated posts and people concerned about global influences.

The activists

Two outlier accounts in particular were what we called “Ultra-Right Activists.” They commented heavily on “The_Donald” and the four European subreddits. One of the outliers had more than 2,500 comments in “The_Donald” and over 1,000 comments in “Le_Pen.” The other had over 1,000 comments on “The_Donald” and over 1,000 across the European subreddits.

These accounts actively called people to action in both the U.S. and European subreddits. For instance, one post in “Le_Pen” recruited people to make memes: “Participate in the Discord chat to help us make memes.” Another post sought to organize Americans and Europeans to work together to create propaganda that would be effective in France: “We still have to explain to the Anglos some things about French politics and candidates so that they can understand. We must translate/transpose into the French context the memes that worked well in the U.S.”

We also found plans to flood Facebook and Twitter with ultra-right content: “Yep, the media call them ‘la fachosphère’ (because we’re obviously literal fascists, right), and it dominates Twitter. That’s a great potential we have there. Soon I’m making an official account to retweet all the subs’ best content to them and make it spread.”

Not all of their efforts were necessarily successful. For example, an effort to transfer Trump’s main campaign slogan to Europe never really got going.

One comment we found on “The_Donald” appeared to lay out a game plan: “PHASE 1: MAGA (Make America Great Again) PHASE 2: MEGA (Make Europe Great Again).” Another sounded a similar theme: “Once we get the ball rolling here we will Make Europe Great Again. Steve Bannon has already been deployed to help Marine Le Pen, we haven’t forgotten about Europe.”

But we found only 210 comments mentioning “Make Europe Great Again” across the four European subreddits. While people on “The_Donald” seemed excited about spreading the phrase, Europeans didn’t go for it. Maybe the fact that the phrase is in English didn’t click well with Europeans.

The bots

This group involved accounts that were moderately involved in both “The_Donald” and the European subreddits. While many of them were undoubtedly real people, some accounts in this group behaved like bots, posting the same comment repeatedly, or even including the word “bot” in their account names.
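The two signals mentioned above (repeated identical comments and “bot” in the account name) can be expressed as a simple heuristic. The Python sketch below is an assumption-laden illustration: the function name `looks_like_bot` and the repeat threshold of 3 are hypothetical choices, not values from the study, and a substring check on the name will produce false positives for ordinary names that happen to contain “bot.”

```python
from collections import Counter


def looks_like_bot(username, comments, repeat_threshold=3):
    """Flag an account using two crude signals.

    1. The username contains the substring "bot" (case-insensitive).
    2. Some comment text appears at least `repeat_threshold` times,
       ignoring case and surrounding whitespace.
    The threshold is an arbitrary illustrative value.
    """
    if "bot" in username.lower():
        return True
    counts = Counter(comment.strip().lower() for comment in comments)
    return any(n >= repeat_threshold for n in counts.values())


if __name__ == "__main__":
    print(looks_like_bot("MemeBot9000", []))
    print(looks_like_bot("alice", ["MEGA!", "mega!", " MEGA! "]))
    print(looks_like_bot("alice", ["hi", "hello"]))
```

A heuristic like this only surfaces candidates for manual review; it cannot establish who runs an account or why.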

Just as we don’t know the real identities or locations of the humans who posted, it’s not clear who might have been running the bots, or why. But these bot-type messages were posted in both The_Donald and the European subreddits. They seemed to be used as a way to create silly or fun collaborations between Americans and Europeans, and to spread an ultra-right-wing view of certain world events.

Some of the words most commonly used by people in this group were “news,” “fake” and “CNN.” People seemed to use those words to criticize traditional news media coverage of the ultra-right. However, some people also commented about possibly manipulating the big news channels to get coverage for Le Pen similar to Trump’s strategies:

“So we must get Le Pens (sic) name in the news every damn day. Just the (sic) like the MSM [mainstream media] couldn’t ignore Donald here, they will have to give her air time which will help her reach the disenfranchised.”

The anti-globalists

A third group of accounts were highly active on “The_Donald” but far less so on the European subreddits we examined. When they did join the European discussions, it was usually to discuss how European and U.S. liberals were ruining everyone and everything around the globe. People in this cluster appeared to participate in the European subreddits primarily to emphasize the potentially negative actions that liberals had orchestrated.

With the French election still weeks away, any effects these people might be having remain unclear. But it’s worth watching, and seeing where these activists turn their attention next.

“Countering Fake News in Natural Disasters via Bots and Citizen Crowds”

By Tonmona Roy

On September 19, 2017, Mexico City was hit by a 7.1 magnitude earthquake that killed hundreds of people. The death toll rose quickly, with people trapped under the debris of fallen buildings. When there is a catastrophe of this magnitude, it is hard for the government to quickly assist everyone. Many people started using social media to spread news about trapped people and needed supplies. Among the social media platforms, Twitter became the main site for exchanging information and mobilizing citizens for action. People started using hashtags to learn about what was happening in their neighborhoods and what direct actions they could take to help. Some of the most popular hashtags were #AquiNecesitamos (#HereWeNeed) and #Verificado19S (#Verified19S, where 19S stands for September 19, the day of the earthquake). With these hashtags, people started to post what they needed and where to deliver it.

But Dr. Elena Orozco, her friends and family all suddenly started reporting on social media:

“…Elena Orozco is not trapped in any building. She is right here with us. She was trying to rescue her co-workers, who were the ones trapped in the building. We are actually still missing Erik Gaona Garnica who decided to go back into the building to get his computer…”

Systems for Countering Fake News Stories

Given that fake news was critically affecting the rescue and well-being of people, we decided to do something about it. We quickly realized that Codeando Mexico (a social good startup) and universities across Mexico, such as UNAM, were organizing crowds of citizens to build civic media to help with the earthquake response. Our research lab (the HCI lab at West Virginia University) thus decided to join forces with them, and in a weekend we had together built a large-scale system to counter fake news and bring verified news about the earthquake.

This led us to decide to bootstrap on existing social networks of people to solve the cold-start problem. Through our investigations, we identified that citizens had put together a Google Spreadsheet where they were posting news reports about the earthquake that were 100% verified (they had a group of people on the ground who actively verified each news report). The group would then manually post the verified news from the spreadsheet on their social media accounts. But as the group became more popular, it became harder for the volunteers to find the time to keep up and coordinate.

Bootstrapping Bots on Networks of Volunteers

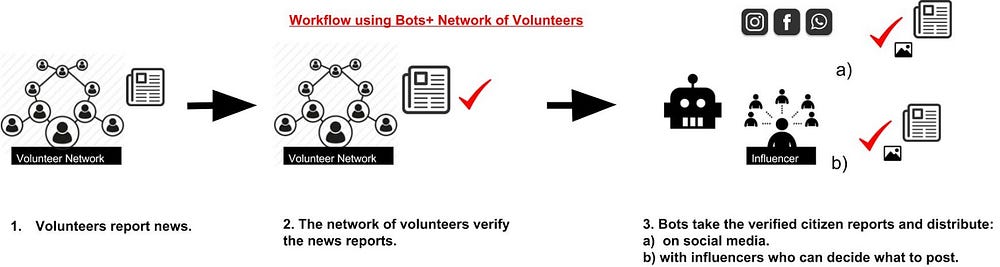

Our second design focuses on automating some of the critical bottlenecks that these networks of volunteers experienced when verifying news. In our interviews, we identified that it was difficult for volunteers to differentiate fake and real news because it involved gathering all of the facts behind the story, and that sharing the news on social media was also tedious. Our second platform therefore introduced the idea of leveraging citizen crowds and bots (such as our bot @FakeSismo). Bots help in the verification of news by gathering facts and then massively sharing the verified news stories on social media, along with an automatically generated image macro that helps to give more visibility to the story. In this way, human volunteers can focus more on verifying the information. The workflow of our system is as follows:

Bots in Action

To test our bot, we had it start by tweeting verified information about the resources needed, and it got very good responses. The bot currently has 176 followers, and that number is increasing. We also saw that citizens tried to actively verify news reports along with the bot.

In short, our bot is working together with a group of enthusiastic volunteers and helping to gather and distribute verified information. As we test the bot further, we hope to connect with a larger mass of people to start a platform that can counter fake news during natural disasters.

Social Media, Civic Engagement, and the Slacktivism Hypothesis: Lessons from Mexico's “El Bronco”

Does social media use have a positive or negative impact on civic engagement? The cynical “slacktivism hypothesis” holds that if citizens use social media for political conversation, those conversations will be fleeting and vapid. Most attempts to answer this question involve public opinion data from the United States, so we offer an examination of an important case from Mexico, where an independent candidate used social media to communicate with the public and eschewed traditional media outlets. He won the race for state governor, defeating candidates from traditional parties and triggering sustained public engagement well beyond election day. In our investigation, we analyze over 750,000 posts, comments, and replies over three years of conversations on the public Facebook page of “El Bronco.” We analyze how rhythms of political communication between the candidate and users evolved over time and demonstrate that social media can be used to sustain a large quantity of civic exchanges about public life well beyond a particular political event.

Read more about our research: here

Spanish Version of Paper: here